
Zirui Zhu

I am a PhD candidate in Computer Science at the National University of Singapore, where I am fortunate to be advised by Prof. Yang You. Before that, I received my B.Eng. in Electronics Engineering from Tsinghua University in 2022, where I was grateful to work with Prof. Yong Li and Prof. Xu Chen.

Research Interests: I develop structure-aware, resource-efficient methods for foundation models. My research studies how to exploit heterogeneity in data, inputs, and optimization to allocate limited compute, tokens, supervision, and model updates where they matter most. Recent work spans budgeted long-video understanding, confidence-gated reward modeling, and efficient LLM post-training.

I am open to research collaborations from both industry and academia. I am also seeking Summer 2026 research internships with the possibility of full-time conversion. Feel free to reach out.

News


Experience


Publications

Representative papers are highlighted.

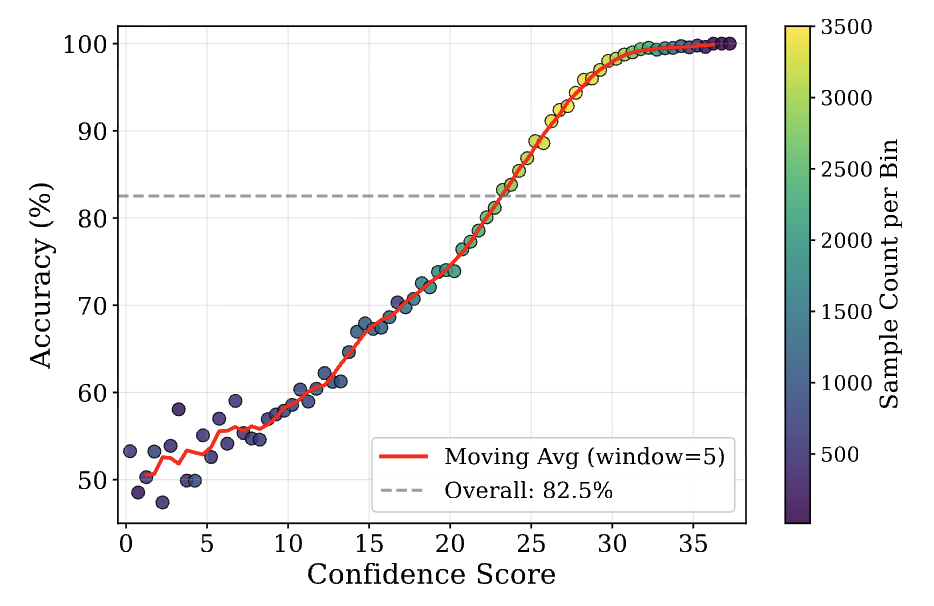

CAMEL: Confidence-Gated Reflection for Reward Modeling

Zirui Zhu, Hailun Xu, Yang Luo, Yong Liu, Kanchan Sarkar, Kun Xu, Yang You

ICML, 2026 paper

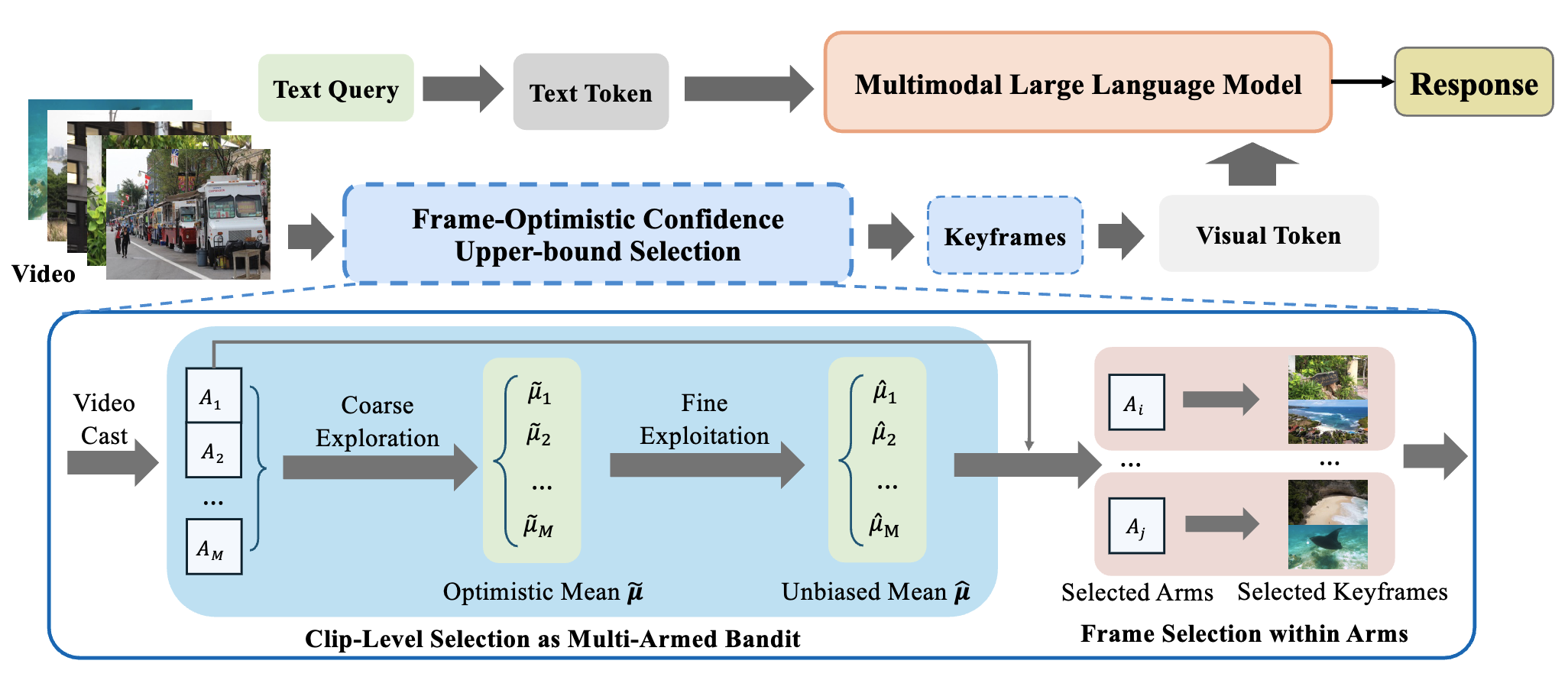

FOCUS: Efficient Keyframe Selection for Long Video Understanding

Zirui Zhu, Hailun Xu, Yang Luo, Yong Liu, Kanchan Sarkar, Zhenheng Yang, Yang You

ICLR, 2026 paper / code

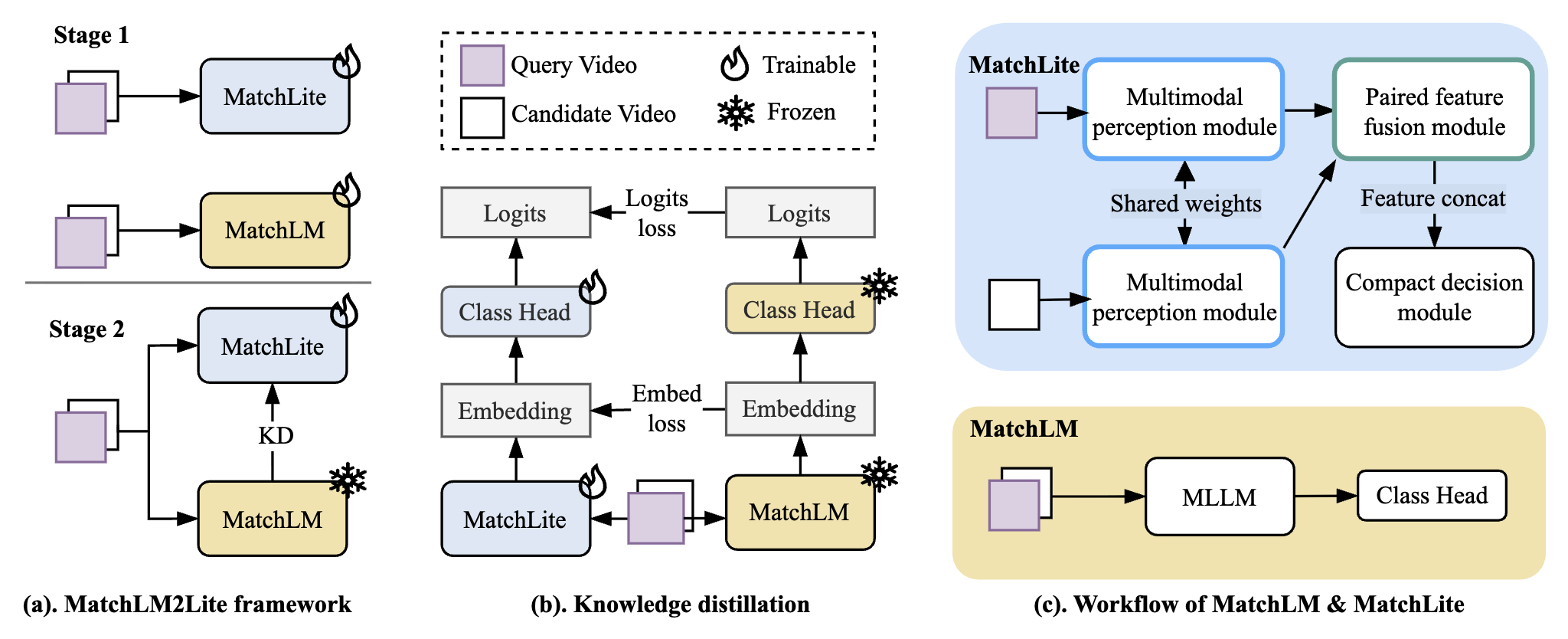

MatchLM2Lite: A Scalable MLLM-to-Lite Framework for Reproduced Content Identification

Xiaotian Fan, Hiok Hian Ong, David Yuchen Wang, Zirui Zhu, Kanchan Sarkar, Kun Xu

KDD, 2026 paper

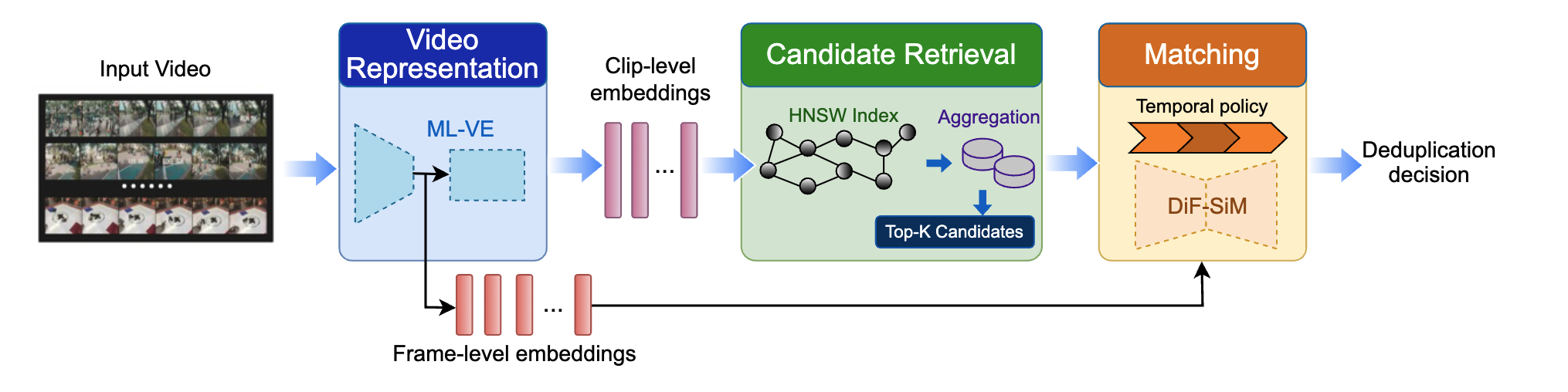

MLT-Dedup: Efficient Large-Scale Online Video Deduplication via Multi-Level Representations and Spatial-Temporal Matching

David Yuchen Wang, Haoying Li, Hailun Xu, Wei Chee Yew, Zirui Zhu, Sanjay Saha, Hei Hao, Kanchan Sarkar, Kun Xu

KDD, 2026 paper

SeedFT: Structure-Preserving Fusion for Multi-Seed LLM Fine-Tuning

Yong Liu, Di Fu, Hailun Xu, Yang Luo, Zirui Zhu, Kanchan Sarkar, Zhenheng Yang, Minhao Cheng, Cho-Jui Hsieh, Yang You

Preprint, 2025 paper

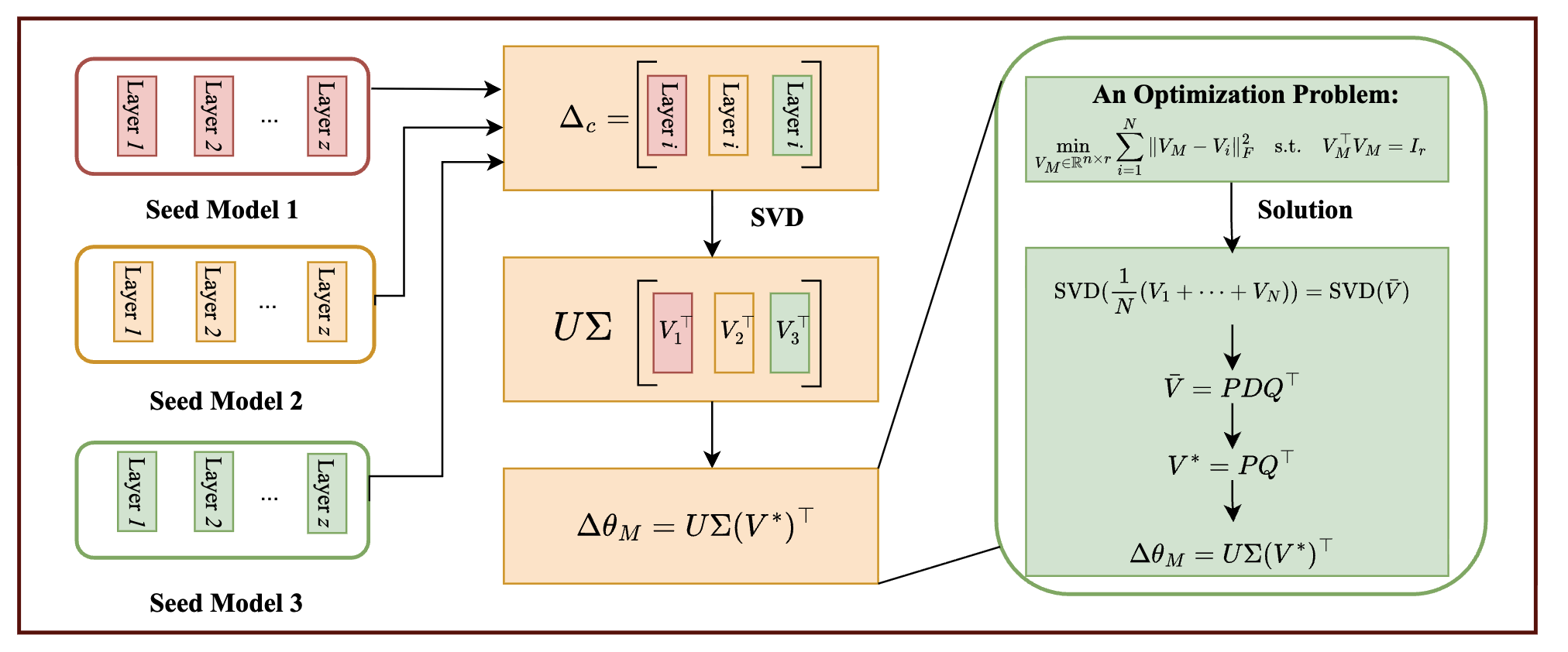

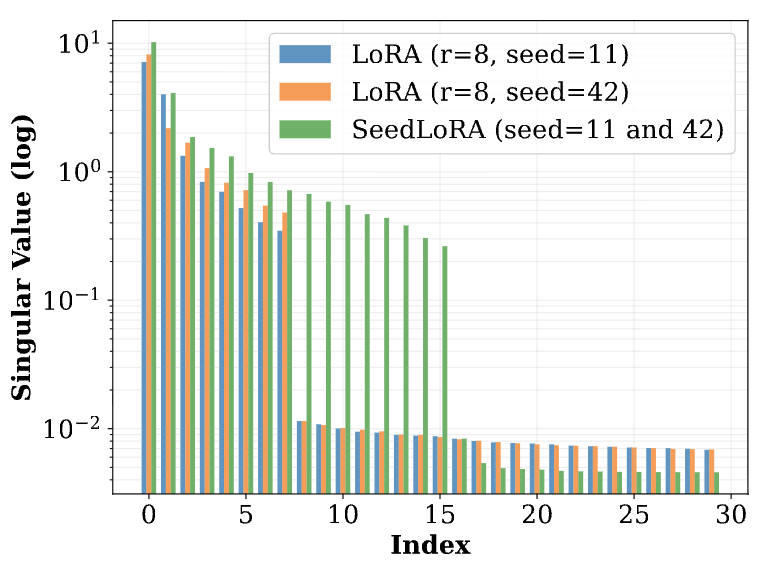

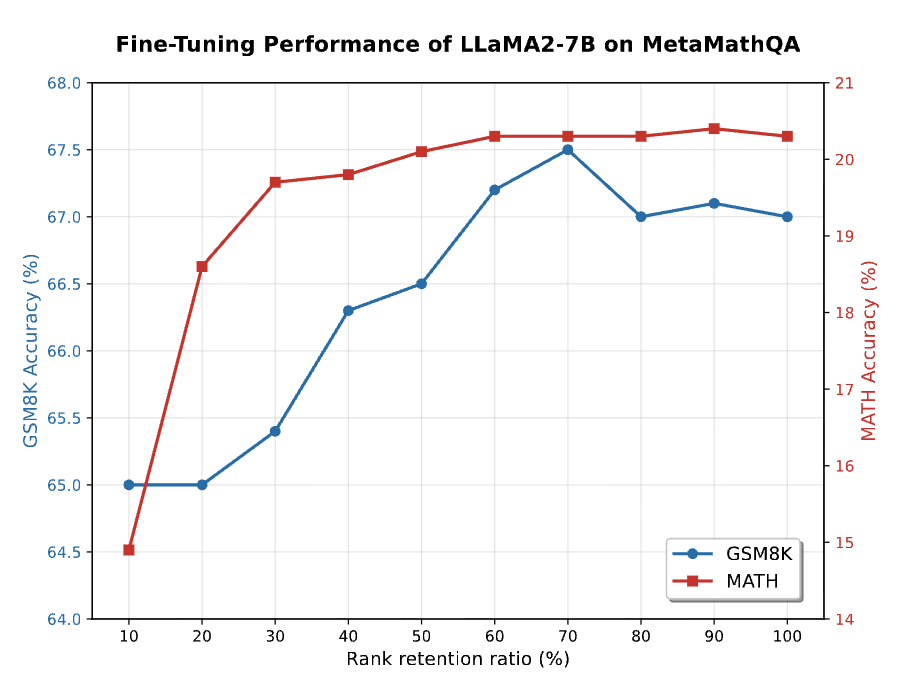

SeedLoRA: A Fusion Approach to Efficient LLM Fine-Tuning

Yong Liu, Di Fu, Shenggan Cheng, Zirui Zhu, Yang Luo, Minhao Cheng, Cho-Jui Hsieh, Yang You

ICML, 2025 paper

POME: Post Optimization Model Edit via Muon-style Projection

Yong Liu, Di Fu, Yang Luo, Zirui Zhu, Minhao Cheng, Cho-Jui Hsieh, Yang You

arXiv, 2025 paper / code

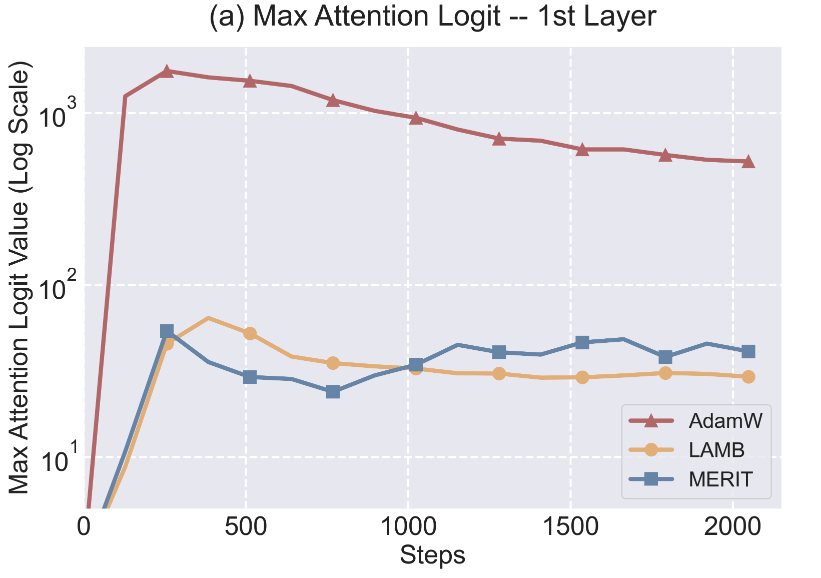

MERIT: Maximum-normalized Element-wise Ratio for Language Model Large-batch Training

Yang Luo, Zangwei Zheng, Ziheng Qin, Zirui Zhu, Yong Liu, Yang You

ICML, 2025 paper / code

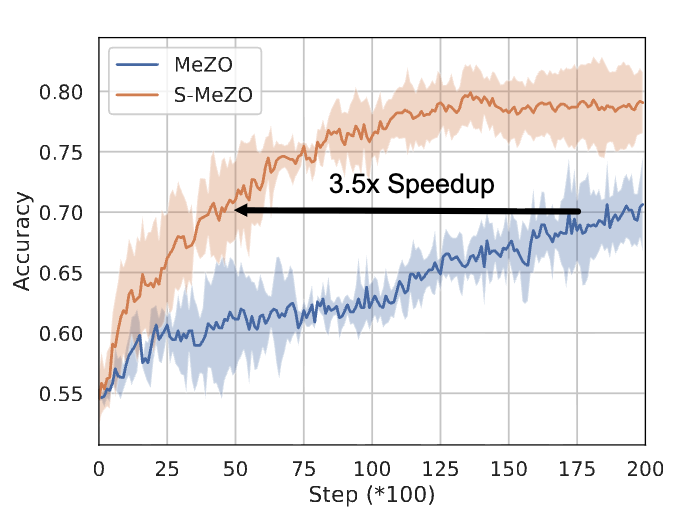

Sparse MeZO: Less Parameters for Better Performance in Zeroth-Order LLM Fine-Tuning

Yong Liu, Zirui Zhu, Chaoyu Gong, Minhao Cheng, Cho-Jui Hsieh, Yang You

NeurIPS, 2025 paper / code

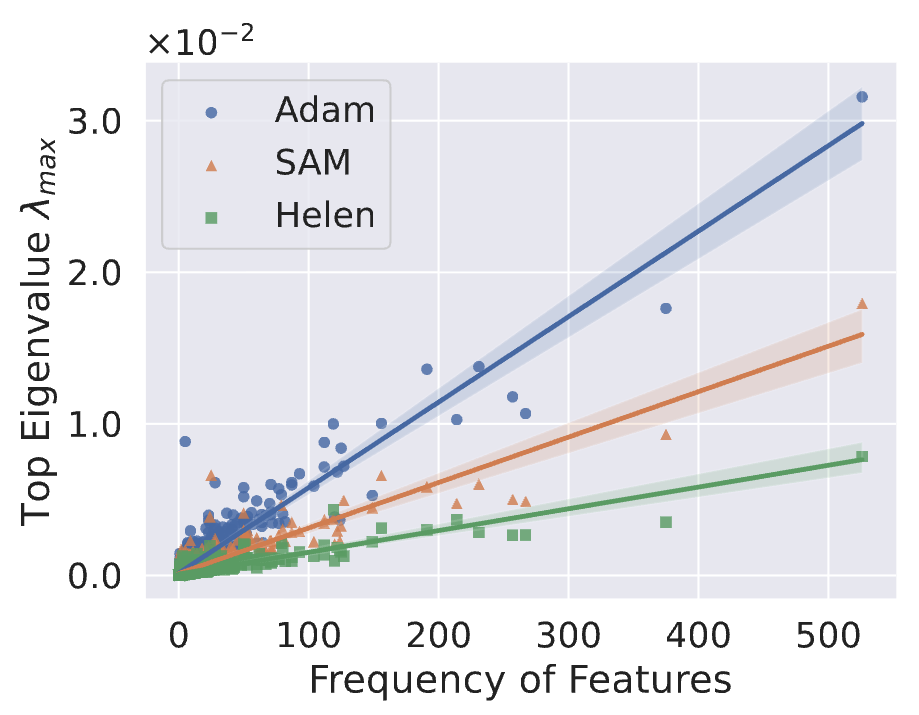

Helen: Optimizing CTR Prediction Models with Frequency-wise Hessian Eigenvalue Regularization

Zirui Zhu, Yong Liu, Zangwei Zheng, Huifeng Guo, Yang You

WWW, 2024 paper / code

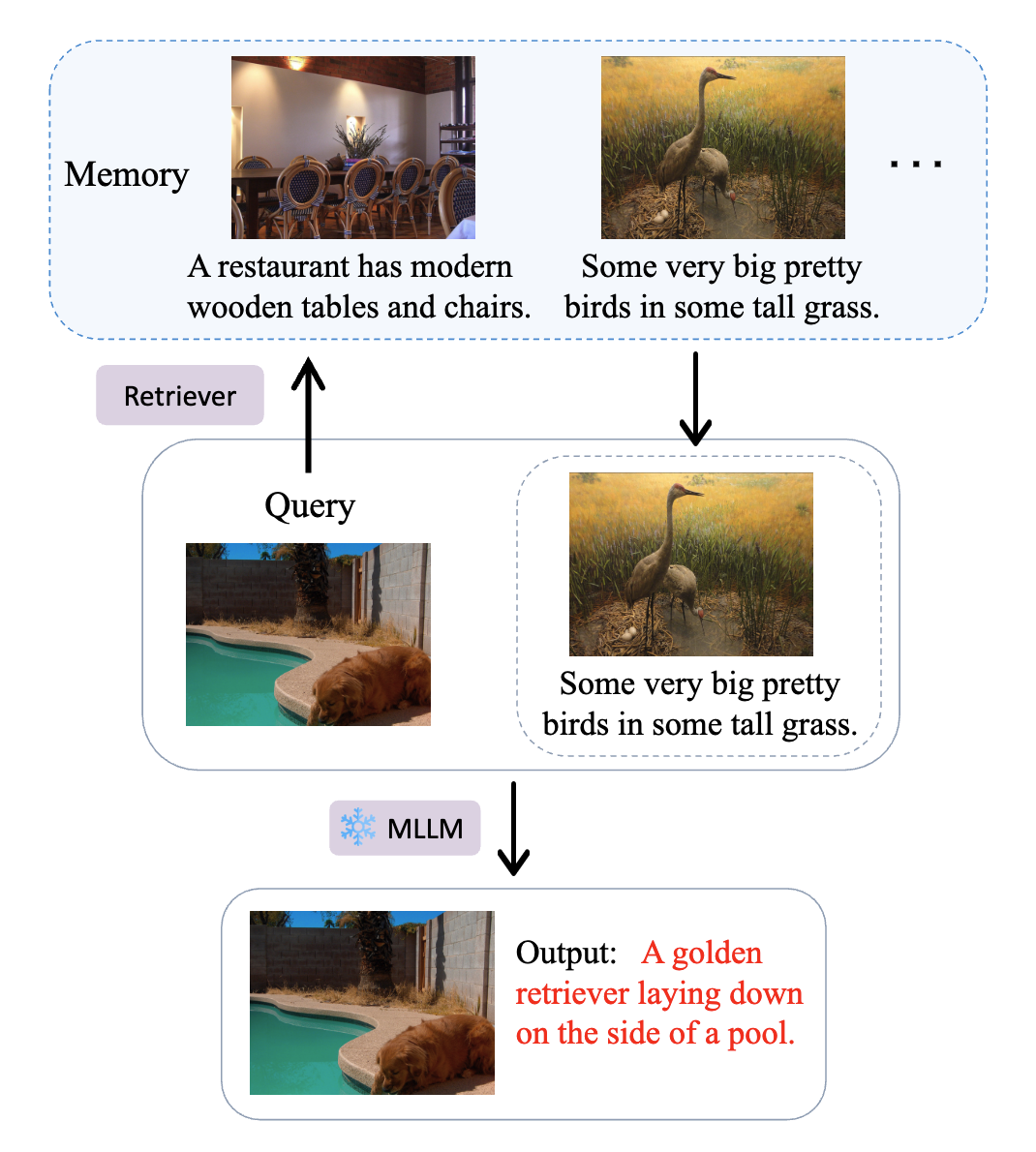

How Does the Textual Information Affect the Retrieval of Multimodal In-Context Learning?

Yang Luo, Zangwei Zheng, Zirui Zhu, Yang You

EMNLP, 2024 paper / code

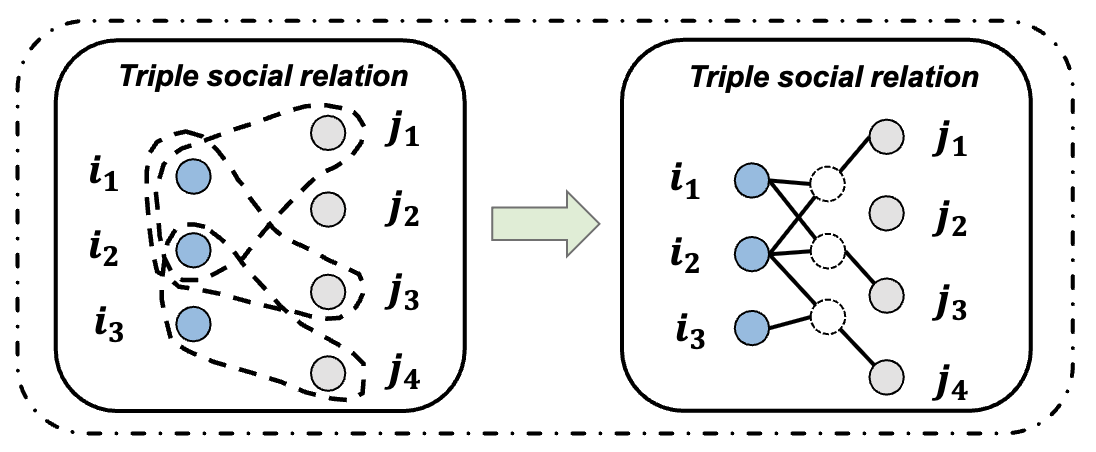

Inhomogeneous Social Recommendation with Hyper-graph Convolutional Networks

Zirui Zhu, Chen Gao, Xu Chen, Nian Li, Depeng Jin, Yong Li

ICDE, 2022 paper / code

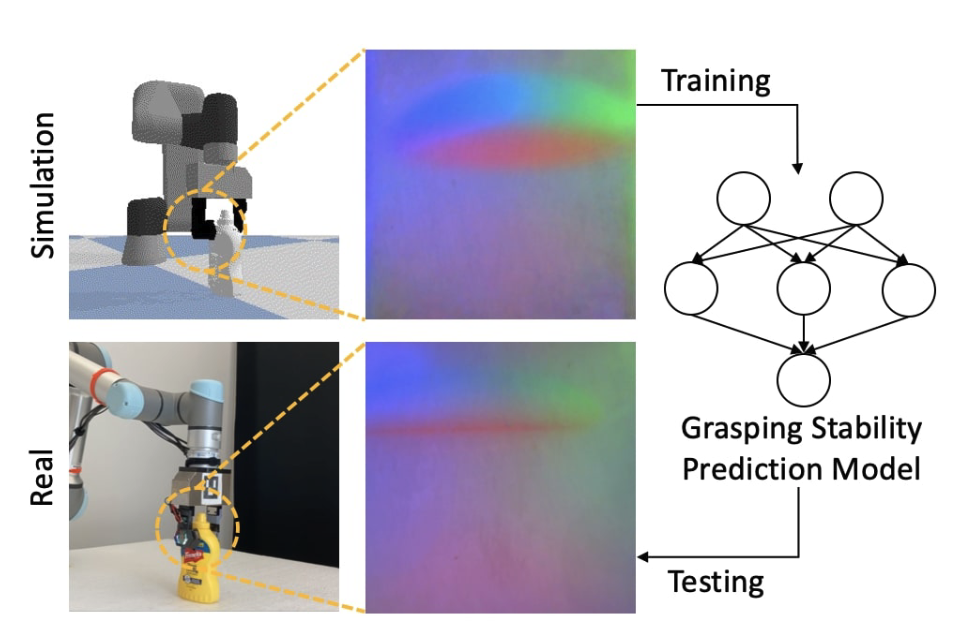

Predicting Grasp Stability with Sim2Real Transfer from Tactile Sensing

Zilin Si, Zirui Zhu, Arpit Agarwal, Stuart Anderson, Wenzhen Yuan

IROS, 2022 paper / code

Adapted from the template by Jon Barron.
